The Next Zero Trust Problem Isn’t Users, It’s Systems

For the past decade, organisations have invested heavily in controlling access.

They have built identity platforms, deployed multi-factor authentication, and embraced zero trust architectures designed to answer a now familiar question: who is allowed in?

By most measures, this has been a success. The perimeter has dissolved, access is better governed, and identity has become the foundation of modern security.

But while attention has been focused on users, a more consequential shift has taken place elsewhere.

Inside the environment, systems have begun to take control.

In energy networks, sensors feed optimisation platforms that dynamically adjust load. In buildings, automation systems regulate heating, cooling, and power distribution. In industrial settings, machines respond to real-time data without human intervention.

Across sectors, a new pattern has emerged. Systems are no longer just exchanging information. They are making decisions and triggering outcomes.

At the same time, a second shift is accelerating this trend.

The rapid adoption of AI, and increasingly agentic AI, is fundamentally changing how systems behave. Systems are no longer executing static logic in isolation. They are interpreting data, making decisions, and interacting with other systems in increasingly autonomous ways.

They are no longer operating as islands. They are deeply connected, continuously interacting, and increasingly responsible for real-world outcomes.

Yet despite this shift, most organisations have very little control over how these interactions actually occur.

Data flows between systems with minimal validation. Decisions are executed without consistent policy. Actions, often with physical or financial consequences, are triggered with limited visibility into why, or under what conditions.

The risk is not theoretical. It is operational.

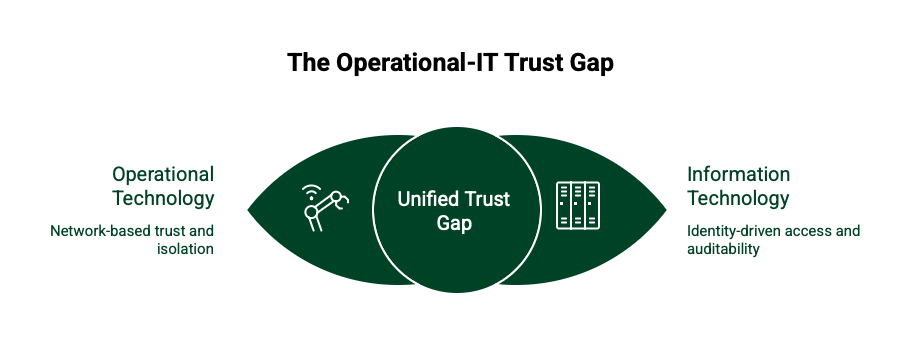

This becomes most apparent at the intersection of operational technology and IT.

Operational environments have historically relied on network trust: segmentation, isolation, and the assumption that anything within a boundary can be trusted. IT environments, by contrast, have evolved towards identity-driven access, policy enforcement, and auditability.

When these two worlds converge, as they increasingly do, a gap emerges.

It is a gap where systems can influence each other freely, without a shared model of trust.

The industry has not yet caught up with this reality.

Cybersecurity continues to focus on access. Networking continues to focus on connectivity. Even modern zero trust architectures remain largely centred on users and applications.

But the more pressing question is no longer who can log in.

It is this:

Which systems are allowed to influence outcomes, and under what conditions?

Answering that question requires a different approach.

What is needed is not another layer of network control, but a way of governing how systems interact, regardless of where they sit or how they are connected.

This is where a new architectural pattern begins to take shape.

System Trust Architecture introduces an identity-driven layer that sits across existing environments, establishing explicit trust between systems and governing how they communicate, make decisions, and trigger actions.

It does not require networks to be redesigned or replaced. Instead, it overlays a set of distributed trust boundaries that ensure every interaction is authenticated, every action is policy-driven, and every decision pathway can be observed.
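To make the three properties concrete, here is a minimal Python sketch of a single trust boundary. It is an illustration under stated assumptions, not a description of any particular product: the names `Interaction`, `TrustBoundary`, and `sign`, the system names, and the shared-secret HMAC scheme are all hypothetical. A real deployment would more likely rely on certificates or workload identities. The sketch authenticates the calling system, checks the requested action against an explicit policy, and records every decision so the pathway can be inspected later.

```python
import hashlib
import hmac
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Interaction:
    source: str    # system requesting the action
    target: str    # system that would carry it out
    action: str    # hypothetical action name, e.g. "set_load"
    value: float   # requested setpoint


def sign(key: bytes, interaction: Interaction) -> str:
    """Sign an interaction with the source system's secret (illustrative scheme)."""
    message = (
        f"{interaction.source}|{interaction.target}|"
        f"{interaction.action}|{interaction.value}"
    ).encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


@dataclass
class TrustBoundary:
    keys: dict    # system name -> shared secret (assumed identity mechanism)
    policy: dict  # (source, target, action) -> (min value, max value)
    audit_log: list = field(default_factory=list)

    def evaluate(self, interaction: Interaction, signature: str) -> bool:
        # 1. Authenticate: does the signature match the claimed source?
        key = self.keys.get(interaction.source)
        authenticated = key is not None and hmac.compare_digest(
            sign(key, interaction), signature
        )
        # 2. Apply policy: is this action allowed, and within its limits?
        rule = (interaction.source, interaction.target, interaction.action)
        limits = self.policy.get(rule)
        permitted = (
            limits is not None and limits[0] <= interaction.value <= limits[1]
        )
        allowed = authenticated and permitted
        # 3. Observe: record the decision, whether allowed or denied.
        self.audit_log.append(
            {
                "interaction": interaction,
                "authenticated": authenticated,
                "permitted": permitted,
                "allowed": allowed,
            }
        )
        return allowed


boundary = TrustBoundary(
    keys={"optimiser": b"demo-secret"},
    policy={("optimiser", "substation-7", "set_load"): (0.0, 80.0)},
)
request = Interaction("optimiser", "substation-7", "set_load", 65.0)
print(boundary.evaluate(request, sign(b"demo-secret", request)))  # True
```

Nothing in the check depends on where the two systems sit on the network. The decision is made on the identity of the caller and the content of the request, and denied requests are logged alongside allowed ones.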

In effect, it shifts control away from the network and into the interaction itself.

Trust is no longer assumed because something is on the inside. It is continuously established, enforced, and verified.

The implications are significant.

Organisations gain visibility into how decisions are made, not just where traffic flows. They can define and enforce rules around what systems are allowed to do, rather than simply where they can connect. And critically, they can begin to control how digital systems influence physical outcomes.

This becomes even more important as AI systems evolve.

Agentic systems do not simply respond. They act. They call APIs, trigger workflows, and coordinate with other systems. Without a governing layer of trust and policy, these interactions scale risk as quickly as they scale capability.
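As a rough illustration of what a governing layer might look like for an agent, the Python sketch below routes every tool call through a single dispatcher that enforces an allow-list and a per-agent call budget. The class `GovernedToolbox`, the agent and tool names, and the budget mechanism are assumptions made for the example, not features of any specific agent framework.

```python
from collections import Counter
from typing import Any, Callable


class GovernedToolbox:
    """Dispatches agent tool calls only when policy permits them."""

    def __init__(
        self,
        tools: dict[str, Callable[..., Any]],
        budgets: dict[tuple[str, str], int],
    ) -> None:
        self._tools = tools
        self._budgets = budgets            # (agent, tool) -> maximum calls
        self._used: Counter = Counter()    # calls made so far
        self.decisions: list[tuple[str, str, str]] = []

    def call(self, agent: str, tool: str, **kwargs: Any) -> Any:
        # A missing budget entry means the agent has no right to the tool.
        limit = self._budgets.get((agent, tool), 0)
        if tool not in self._tools or self._used[(agent, tool)] >= limit:
            self.decisions.append((agent, tool, "denied"))
            raise PermissionError(f"{agent} may not call {tool}")
        self._used[(agent, tool)] += 1
        self.decisions.append((agent, tool, "allowed"))
        return self._tools[tool](**kwargs)


toolbox = GovernedToolbox(
    tools={"open_valve": lambda percent: f"valve set to {percent}%"},
    budgets={("hvac-agent", "open_valve"): 2},
)
print(toolbox.call("hvac-agent", "open_valve", percent=40))
```

The budget is deliberately crude. The point is that the limit lives outside the agent, so the agent's growing capability does not automatically widen what it is permitted to do.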

As systems become more autonomous, the need for governed interaction becomes more urgent.

Of course, architecture alone is not enough. To be effective, this model requires a way to be implemented, managed, and understood in real environments.

This is where the next layer of capability emerges.

At LDS, this thinking has led to the development of a trust control approach: a practical way of discovering system interactions, mapping how decisions flow, and applying policy across those interactions through a unified interface.

In simple terms, it provides a view of how systems influence each other, and a mechanism to control that influence.

Rather than treating system interactions as opaque or incidental, they become explicit, governed, and observable.

This is the foundation of what we describe as a System Trust Portal: a control layer that allows organisations to see, understand, and govern how systems behave across OT and IT environments.

The direction of travel is clear.

As organisations continue to digitise physical systems, adopt AI-driven decision-making, and connect previously isolated environments, the number and importance of system-to-system interactions will only increase.

The question is whether those interactions remain implicit, or become something that is deliberately designed.

The last decade was defined by securing access.

The next will be defined by controlling influence.

Because in modern environments, the real risk is not who gets in.

It is what systems are allowed to do once they are connected.